mirror of

https://github.com/humanlayer/12-factor-agents.git

synced 2025-08-20 18:59:53 +03:00

workshop stuff


@@ -10,7 +10,7 @@ generator target {

|

||||

|

||||

// The version of the BAML package you have installed (e.g. same version as your baml-py or @boundaryml/baml).

|

||||

// The BAML VSCode extension version should also match this version.

|

||||

-version "0.85.0"
+version "0.202.0"

|

||||

|

||||

// Valid values: "sync", "async"

|

||||

// This controls what `b.FunctionName()` will be (sync or async).

|

||||

|

||||

@@ -8,7 +8,7 @@

|

||||

"name": "my-agent",

|

||||

"version": "0.1.0",

|

||||

"dependencies": {

|

||||

-"baml": "^0.0.0",
+"@boundaryml/baml": "latest",

|

||||

"tsx": "^4.15.0",

|

||||

"typescript": "^5.0.0"

|

||||

},

|

||||

@@ -19,6 +19,142 @@

|

||||

"eslint": "^8.0.0"

|

||||

}

|

||||

},

|

||||

"node_modules/@boundaryml/baml": {

|

||||

"version": "0.202.0",

|

||||

"resolved": "https://registry.npmjs.org/@boundaryml/baml/-/baml-0.202.0.tgz",

|

||||

"integrity": "sha512-0RNgCBp2egdWJfsNqNaWe/qUg6ea9OLzkcUTE8+wHmlpB2SgK5QRYTaOnt9WX4KHnUvIiMJijIOjy35RGYk45g==",

|

||||

"license": "MIT",

|

||||

"dependencies": {

|

||||

"@scarf/scarf": "^1.3.0"

|

||||

},

|

||||

"bin": {

|

||||

"baml-cli": "cli.js"

|

||||

},

|

||||

"engines": {

|

||||

"node": ">= 10"

|

||||

},

|

||||

"optionalDependencies": {

|

||||

"@boundaryml/baml-darwin-arm64": "0.202.0",

|

||||

"@boundaryml/baml-darwin-x64": "0.202.0",

|

||||

"@boundaryml/baml-linux-arm64-gnu": "0.202.0",

|

||||

"@boundaryml/baml-linux-arm64-musl": "0.202.0",

|

||||

"@boundaryml/baml-linux-x64-gnu": "0.202.0",

|

||||

"@boundaryml/baml-linux-x64-musl": "0.202.0",

|

||||

"@boundaryml/baml-win32-x64-msvc": "0.202.0"

|

||||

}

|

||||

},

|

||||

"node_modules/@boundaryml/baml-darwin-arm64": {

|

||||

"version": "0.202.0",

|

||||

"resolved": "https://registry.npmjs.org/@boundaryml/baml-darwin-arm64/-/baml-darwin-arm64-0.202.0.tgz",

|

||||

"integrity": "sha512-i0Y9tCkaWcERJL4yL1/lWSvAYzKiGMsuO1MMDFO3R3cBvbGpRlGY13hKsDtpQy7YePoGzy68MMAqQFm1Y6ucLw==",

|

||||

"cpu": [

|

||||

"arm64"

|

||||

],

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"os": [

|

||||

"darwin"

|

||||

],

|

||||

"engines": {

|

||||

"node": ">= 10"

|

||||

}

|

||||

},

|

||||

"node_modules/@boundaryml/baml-darwin-x64": {

|

||||

"version": "0.202.0",

|

||||

"resolved": "https://registry.npmjs.org/@boundaryml/baml-darwin-x64/-/baml-darwin-x64-0.202.0.tgz",

|

||||

"integrity": "sha512-e9q/igONW33ltNUAxW6Jimv/1bucN1LgD0TqaF6gSjhyelZr4bZ68f3n5rwK0UF+4VBkNkvC+UXoWgYky5dBOg==",

|

||||

"cpu": [

|

||||

"x64"

|

||||

],

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"os": [

|

||||

"darwin"

|

||||

],

|

||||

"engines": {

|

||||

"node": ">= 10"

|

||||

}

|

||||

},

|

||||

"node_modules/@boundaryml/baml-linux-arm64-gnu": {

|

||||

"version": "0.202.0",

|

||||

"resolved": "https://registry.npmjs.org/@boundaryml/baml-linux-arm64-gnu/-/baml-linux-arm64-gnu-0.202.0.tgz",

|

||||

"integrity": "sha512-3DWTK9gMUHv+BlsZ1BAprMXQsRzPFKhlzmG71y+G3s0ZJIFzrQ9rmdv93lejyslPPTw0M2TD2CjBDrNsnmSX3A==",

|

||||

"cpu": [

|

||||

"arm64"

|

||||

],

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"os": [

|

||||

"linux"

|

||||

],

|

||||

"engines": {

|

||||

"node": ">= 10"

|

||||

}

|

||||

},

|

||||

"node_modules/@boundaryml/baml-linux-arm64-musl": {

|

||||

"version": "0.202.0",

|

||||

"resolved": "https://registry.npmjs.org/@boundaryml/baml-linux-arm64-musl/-/baml-linux-arm64-musl-0.202.0.tgz",

|

||||

"integrity": "sha512-fTFK+w7ku61dKzIeIaNsMLpiT793MKmj1La6oznhwpuoOdLm861GXzJUut4Bri8n4UFULfnPiCCp4nU5nwpwcQ==",

|

||||

"cpu": [

|

||||

"arm64"

|

||||

],

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"os": [

|

||||

"linux"

|

||||

],

|

||||

"engines": {

|

||||

"node": ">= 10"

|

||||

}

|

||||

},

|

||||

"node_modules/@boundaryml/baml-linux-x64-gnu": {

|

||||

"version": "0.202.0",

|

||||

"resolved": "https://registry.npmjs.org/@boundaryml/baml-linux-x64-gnu/-/baml-linux-x64-gnu-0.202.0.tgz",

|

||||

"integrity": "sha512-gKainskhyex0c8AmzrfYSbyRXwK4OCSjpO6oKni8+EFcaH/OZD6rDqmS1ggcNoTKw2MqC/H1hfyMCw3BdEDxVA==",

|

||||

"cpu": [

|

||||

"x64"

|

||||

],

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"os": [

|

||||

"linux"

|

||||

],

|

||||

"engines": {

|

||||

"node": ">= 10"

|

||||

}

|

||||

},

|

||||

"node_modules/@boundaryml/baml-linux-x64-musl": {

|

||||

"version": "0.202.0",

|

||||

"resolved": "https://registry.npmjs.org/@boundaryml/baml-linux-x64-musl/-/baml-linux-x64-musl-0.202.0.tgz",

|

||||

"integrity": "sha512-KHrG8iut5vc58L41eKtNF8W1OgDzYMmXRtcuevHuy22cRb4TbhYP2bTOo+r9iZOc/zBN1Yl1Cv3U+u+pX3ypPw==",

|

||||

"cpu": [

|

||||

"x64"

|

||||

],

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"os": [

|

||||

"linux"

|

||||

],

|

||||

"engines": {

|

||||

"node": ">= 10"

|

||||

}

|

||||

},

|

||||

"node_modules/@boundaryml/baml-win32-x64-msvc": {

|

||||

"version": "0.202.0",

|

||||

"resolved": "https://registry.npmjs.org/@boundaryml/baml-win32-x64-msvc/-/baml-win32-x64-msvc-0.202.0.tgz",

|

||||

"integrity": "sha512-DcZiQ/eRKf11FgKFnVN8H1Tsnc6M9UgC6tLKIwr0YUYe2buKPXNkS2tPk0n4gHSnPX/bdWqyeUchk+4E6yqiDQ==",

|

||||

"cpu": [

|

||||

"x64"

|

||||

],

|

||||

"license": "MIT",

|

||||

"optional": true,

|

||||

"os": [

|

||||

"win32"

|

||||

],

|

||||

"engines": {

|

||||

"node": ">= 10"

|

||||

}

|

||||

},

|

||||

"node_modules/@esbuild/aix-ppc64": {

|

||||

"version": "0.25.4",

|

||||

"resolved": "https://registry.npmjs.org/@esbuild/aix-ppc64/-/aix-ppc64-0.25.4.tgz",

|

||||

@@ -606,6 +742,13 @@

|

||||

"node": ">= 8"

|

||||

}

|

||||

},

|

||||

"node_modules/@scarf/scarf": {

|

||||

"version": "1.4.0",

|

||||

"resolved": "https://registry.npmjs.org/@scarf/scarf/-/scarf-1.4.0.tgz",

|

||||

"integrity": "sha512-xxeapPiUXdZAE3che6f3xogoJPeZgig6omHEy1rIY5WVsB3H2BHNnZH+gHG6x91SCWyQCzWGsuL2Hh3ClO5/qQ==",

|

||||

"hasInstallScript": true,

|

||||

"license": "Apache-2.0"

|

||||

},

|

||||

"node_modules/@types/json-schema": {

|

||||

"version": "7.0.15",

|

||||

"resolved": "https://registry.npmjs.org/@types/json-schema/-/json-schema-7.0.15.tgz",

|

||||

@@ -925,11 +1068,6 @@

|

||||

"dev": true,

|

||||

"license": "MIT"

|

||||

},

|

||||

-"node_modules/baml": {
-"version": "0.0.0",
-"resolved": "https://registry.npmjs.org/baml/-/baml-0.0.0.tgz",
-"integrity": "sha512-wlrNMVNrHKoB65HXhjTD8mFLWQZVaapWl35gHB+wrp4Sx1+zm5U32LJ2cgYV+1/UPBVC198E5PXJdwYNf2JFKg=="
-},

|

||||

"node_modules/brace-expansion": {

|

||||

"version": "2.0.1",

|

||||

"resolved": "https://registry.npmjs.org/brace-expansion/-/brace-expansion-2.0.1.tgz",

|

||||

|

||||

@@ -7,7 +7,7 @@

|

||||

"build": "tsc"

|

||||

},

|

||||

"dependencies": {

|

||||

-"baml": "^0.0.0",
+"@boundaryml/baml": "latest",

|

||||

"tsx": "^4.15.0",

|

||||

"typescript": "^5.0.0"

|

||||

},

|

||||

|

||||

@@ -1,9 +1,9 @@

|

||||

# Workshop 2025-07-16: Python/Jupyter Notebook Implementation

|

||||

|

||||

-• **Main Tool**: `hack/walkthroughgen_py.py` - Converts TypeScript walkthrough to Jupyter notebooks
-• **Config**: `hack/walkthrough_python.yaml` - Defines notebook structure and content
-• **Output**: `hack/workshop_final.ipynb` - Generated notebook with Chapters 0-7
-• **Testing**: `hack/test_notebook_colab_sim.sh` - Simulates Google Colab environment
+• **Main Tool**: `walkthroughgen_py.py` - Converts TypeScript walkthrough to Jupyter notebooks
+• **Config**: `walkthrough.yaml` - Defines notebook structure and content
+• **Output**: `workshop_final.ipynb` - Generated notebook with Chapters 0-7
+• **Testing**: `test_notebook_colab_sim.sh` - Simulates Google Colab environment

|

||||

|

||||

## Key Implementation Learnings

|

||||

|

||||

@@ -53,15 +53,15 @@

|

||||

|

||||

## Testing Commands

|

||||

|

||||

-• Generate notebook: `uv run python hack/walkthroughgen_py.py hack/walkthrough_python.yaml -o hack/test.ipynb`
-• Full Colab sim: `cd hack && ./test_notebook_colab_sim.sh`
+• Generate notebook: `uv run python walkthroughgen_py.py walkthrough.yaml -o test.ipynb`
+• Full Colab sim: `./test_notebook_colab_sim.sh`

|

||||

• Run BAML tests: `baml-cli test` (from directory with baml_src)

|

||||

|

||||

## File Structure

|

||||

|

||||

• `walkthrough/*.py` - Python implementations of each chapter's code

|

||||

• `walkthrough/*.baml` - BAML files fetched from GitHub during notebook execution

|

||||

-• `hack/walkthroughgen_py.py` - Main conversion tool
-• `hack/walkthrough_python.yaml` - Notebook definition with all chapters
-• `hack/test_notebook_colab_sim.sh` - Full Colab environment simulation
-• `hack/workshop_final.ipynb` - Final generated notebook ready for workshop
+• `walkthroughgen_py.py` - Main conversion tool
+• `walkthrough.yaml` - Notebook definition with all chapters
+• `test_notebook_colab_sim.sh` - Full Colab environment simulation
+• `workshop_final.ipynb` - Final generated notebook ready for workshop

|

||||

|

||||

71

workshops/2025-07-16/hack/analyze_log_capture.py

Normal file


@@ -0,0 +1,71 @@

#!/usr/bin/env python3
"""
Analyze notebook for BAML log capture success/failure
"""
import json
import sys
import os

def check_logs(notebook_path):
    """Check if BAML logs were captured in the notebook"""

    if not os.path.exists(notebook_path):
        print(f"❌ Notebook not found: {notebook_path}")
        return False, False

    with open(notebook_path) as f:
        nb = json.load(f)

    found_log_pattern = False
    found_capture_test = False

    for i, cell in enumerate(nb['cells']):
        if cell['cell_type'] == 'code' and 'outputs' in cell:
            # Check if this is a log capture test cell
            source = ''.join(cell.get('source', []))
            if 'run_with_baml_logs' in source:
                found_capture_test = True
                print(f'Found log capture test in cell {i}')

                # Check outputs for BAML logs
                for output in cell['outputs']:
                    if output.get('output_type') == 'stream' and 'text' in output:
                        text = ''.join(output['text'])
                        # Look for the specific BAML log pattern
                        if '---Parsed Response (class DoneForNow)---' in text:
                            found_log_pattern = True
                            print(f'✅ FOUND BAML LOG PATTERN in cell {i} output!')
                            log_lines = [line for line in text.split('\n') if 'Parsed Response' in line]
                            if log_lines:
                                print(f'Log excerpt: {log_lines[0]}')

                        # Also check for our test markers
                        if 'Captured BAML Logs' in text:
                            print(f'Found "Captured BAML Logs" section in cell {i}')
                        if 'No BAML Logs Captured' in text:
                            print(f'Found "No BAML Logs Captured" section in cell {i}')

    return found_capture_test, found_log_pattern

def main():
    if len(sys.argv) != 2:
        print("Usage: python analyze_log_capture.py <notebook_path>")
        sys.exit(1)

    notebook_path = sys.argv[1]
    capture_test_found, log_pattern_found = check_logs(notebook_path)

    if not capture_test_found:
        print('❌ FAIL: No log capture test found in notebook')
        sys.exit(1)

    if log_pattern_found:
        print('✅ PASS: BAML logs successfully captured in notebook output!')
        sys.exit(0)
    else:
        print('❌ FAIL: BAML log pattern not found in captured output')
        print('This means the log capture method is NOT working')
        sys.exit(1)

if __name__ == '__main__':
    main()

87

workshops/2025-07-16/hack/inspect_notebook.py

Normal file


@@ -0,0 +1,87 @@

#!/usr/bin/env python3
"""
Utility to inspect notebook cell outputs for debugging
"""
import json
import sys
import os

def inspect_notebook(notebook_path, filter_keyword=None):
    """Inspect notebook cells and outputs"""

    if not os.path.exists(notebook_path):
        print(f"❌ Notebook not found: {notebook_path}")
        return

    with open(notebook_path) as f:
        nb = json.load(f)

    print(f"📓 Inspecting notebook: {notebook_path}")
    print(f"📊 Total cells: {len(nb['cells'])}")
    print("=" * 60)

    for i, cell in enumerate(nb['cells']):
        if cell['cell_type'] == 'code':
            source = ''.join(cell.get('source', []))

            # Filter by keyword if provided
            if filter_keyword and filter_keyword.lower() not in source.lower():
                continue

            print(f"\n🔍 CELL {i} ({'code'})")
            print("📝 SOURCE:")
            print(source[:300] + "..." if len(source) > 300 else source)

            if 'outputs' in cell and cell['outputs']:
                print(f"\n📤 OUTPUTS ({len(cell['outputs'])} outputs):")
                for j, output in enumerate(cell['outputs']):
                    output_type = output.get('output_type', 'unknown')
                    print(f" Output {j}: type={output_type}")

                    if 'text' in output:
                        text = ''.join(output['text'])
                        print(f" Text length: {len(text)} chars")

                        # Show first few lines for context
                        lines = text.split('\n')[:5]
                        for line in lines:
                            if line.strip():
                                print(f" > {line[:80]}...")

                        # Check for interesting patterns
                        patterns = ['BAML', 'Parsed', 'Response', 'Error', 'Exception']
                        found_patterns = [p for p in patterns if p in text]
                        if found_patterns:
                            print(f" 🎯 Found patterns: {found_patterns}")

                    elif 'data' in output:
                        data_keys = list(output['data'].keys())
                        print(f" Data keys: {data_keys}")

                    # Check for execution errors
                    if output_type == 'error':
                        print(f" ❌ ERROR: {output.get('ename', 'Unknown')}")
                        print(f" 💬 Message: {output.get('evalue', 'No message')}")
                        if 'traceback' in output:
                            print(f" 📍 Traceback: {len(output['traceback'])} lines")
                            # Show last few lines of traceback
                            for line in output['traceback'][-3:]:
                                print(f" 🔍 {line.strip()}")

            else:
                print("\n📤 No outputs")

            print("-" * 40)

def main():
    if len(sys.argv) < 2:
        print("Usage: python inspect_notebook.py <notebook_path> [filter_keyword]")
        sys.exit(1)

    notebook_path = sys.argv[1]
    filter_keyword = sys.argv[2] if len(sys.argv) > 2 else None

    inspect_notebook(notebook_path, filter_keyword)

if __name__ == '__main__':
    main()

31

workshops/2025-07-16/hack/minimal_test.ipynb

Normal file


@@ -0,0 +1,31 @@

|

||||

{

|

||||

"cells": [

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"import sys\n",

|

||||

"print(\"Hello stdout!\")\n",

|

||||

"print(\"Hello stderr!\", file=sys.stderr)\n",

|

||||

"with open(\"test_output.txt\", \"w\") as f:\n",

|

||||

" f.write(\"Notebook executed successfully!\\n\")\n",

|

||||

"print(\"✅ Test complete\")"

|

||||

]

|

||||

}

|

||||

],

|

||||

"metadata": {

|

||||

"kernelspec": {

|

||||

"display_name": "Python 3",

|

||||

"language": "python",

|

||||

"name": "python3"

|

||||

},

|

||||

"language_info": {

|

||||

"name": "python",

|

||||

"version": "3.8.0"

|

||||

}

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 4

|

||||

}

|

||||

35

workshops/2025-07-16/hack/test_log_capture.sh

Executable file


@@ -0,0 +1,35 @@

|

||||

#!/bin/bash

|

||||

set -e

|

||||

|

||||

echo "🧪 Testing BAML Log Capture..."

|

||||

|

||||

# Clean up any previous test

|

||||

rm -f test_capture.ipynb

|

||||

rm -rf tmp/test_capture_*

|

||||

|

||||

# Generate test notebook

|

||||

echo "📝 Generating test notebook..."

|

||||

uv run python walkthroughgen_py.py simple_log_test.yaml -o test_capture.ipynb

|

||||

|

||||

# Run in sim

|

||||

echo "🚀 Running test in sim..."

|

||||

./test_notebook_colab_sim.sh test_capture.ipynb > /dev/null 2>&1

|

||||

|

||||

# Find the executed notebook in the timestamped directory

|

||||

NOTEBOOK_DIR=$(ls -1dt tmp/test_* | head -1)

|

||||

NOTEBOOK_PATH="$NOTEBOOK_DIR/test_notebook.ipynb"

|

||||

|

||||

echo "📋 Analyzing results from $NOTEBOOK_PATH..."

|

||||

|

||||

# First dump debug info

|

||||

echo "🔍 Dumping debug info..."

|

||||

python3 inspect_notebook.py "$NOTEBOOK_PATH" "run_with_baml_logs"

|

||||

|

||||

echo ""

|

||||

echo "📊 Running log capture analysis..."

|

||||

|

||||

# Check for BAML log patterns in the executed notebook

|

||||

python3 analyze_log_capture.py "$NOTEBOOK_PATH"

|

||||

|

||||

echo "🧹 Cleaning up..."

|

||||

rm -f test_capture.ipynb

|

||||

426

workshops/2025-07-16/hack/testing.md

Normal file


@@ -0,0 +1,426 @@

|

||||

# Jupyter Notebook Testing Framework

|

||||

|

||||

This document describes the general testing framework for validating any functionality in Jupyter notebooks, with a specific example of testing BAML log capture.

|

||||

|

||||

## General Framework

|

||||

|

||||

### Overview

|

||||

|

||||

The testing framework provides a complete iteration loop for testing notebook implementations:

|

||||

|

||||

1. **Generate** test notebooks with specific functionality

|

||||

2. **Execute** notebooks in a simulated Google Colab environment

|

||||

3. **Analyze** executed notebooks for expected outputs and behaviors

|

||||

4. **Report** clear pass/fail results

|

||||

|

||||

### Core Components

|

||||

|

||||

#### Notebook Simulator (`test_notebook_colab_sim.sh`)

|

||||

|

||||

The simulation script creates a realistic Google Colab environment for any notebook:

|

||||

|

||||

**Environment Setup:**

|

||||

- Creates timestamped test directory: `./tmp/test_YYYYMMDD_HHMMSS/`

|

||||

- Sets up fresh Python virtual environment

|

||||

- Installs Jupyter dependencies (`notebook`, `nbconvert`, `ipykernel`)

|

||||

|

||||

**Notebook Execution:**

|

||||

- Copies test notebook to clean environment

|

||||

- Uses `ExecutePreprocessor` to run all cells (simulates Colab execution)

|

||||

- **Critical:** Activates virtual environment before execution

|

||||

- **Critical:** Saves executed notebook with cell outputs back to disk

|

||||

|

||||

**Usage:**

|

||||

```bash

|

||||

./test_notebook_colab_sim.sh your_notebook.ipynb

|

||||

```

|

||||

|

||||

The simulator will:

|

||||

- Execute all cells in the notebook

|

||||

- Preserve the test directory for inspection

|

||||

- Show final directory structure

|

||||

- Report success/failure
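
Because the test directory is preserved and its name carries a sortable timestamp, a follow-up script can find the most recent run by name rather than by modification time. The following is a minimal Python sketch under that assumption (the `tmp/test_YYYYMMDD_HHMMSS` layout described above); `latest_test_dir` is a hypothetical helper for illustration, not part of the repository.

```python
import re
from pathlib import Path
from typing import Optional

# Matches directory names such as test_20250716_191106.
_TEST_DIR_RE = re.compile(r"^test_\d{8}_\d{6}$")


def latest_test_dir(tmp_root: str = "tmp") -> Optional[Path]:
    """Return the newest timestamped test directory under tmp_root, or None."""
    root = Path(tmp_root)
    if not root.is_dir():
        return None
    candidates = [
        p for p in root.iterdir()
        if p.is_dir() and _TEST_DIR_RE.match(p.name)
    ]
    # YYYYMMDD_HHMMSS sorts chronologically when compared as plain text.
    return max(candidates, key=lambda p: p.name, default=None)
```

Matching the full timestamp pattern also skips unrelated directories that merely start with `test_`.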

|

||||

|

||||

#### Output Inspector (`inspect_notebook.py`)

|

||||

|

||||

Debug utility for examining notebook cell outputs in detail:

|

||||

|

||||

**Features:**

|

||||

- Shows cell source code and execution counts

|

||||

- Displays all output types (stream, execute_result, error)

|

||||

- Highlights patterns in output text

|

||||

- Shows execution errors with tracebacks

|

||||

- Filters cells by keywords for focused debugging

|

||||

|

||||

**Usage:**

|

||||

```bash

|

||||

# Inspect all cells

|

||||

python3 inspect_notebook.py path/to/notebook.ipynb

|

||||

|

||||

# Filter for specific content

|

||||

python3 inspect_notebook.py path/to/notebook.ipynb "keyword"

|

||||

|

||||

# Look for errors

|

||||

python3 inspect_notebook.py path/to/notebook.ipynb "error"

|

||||

```

|

||||

|

||||

**Sample Output:**

|

||||

```

|

||||

🔍 CELL 0 (code)

|

||||

📝 SOURCE:

|

||||

import sys

|

||||

print("Hello!")

|

||||

print("Error!", file=sys.stderr)

|

||||

|

||||

📤 OUTPUTS (2 outputs):

|

||||

Output 0: type=stream

|

||||

Text length: 7 chars

|

||||

> Hello!...

|

||||

Output 1: type=stream

|

||||

Text length: 7 chars

|

||||

> Error!...

|

||||

🎯 Found patterns: ['Error']

|

||||

```

|

||||

|

||||

### Key Insights for Notebook Testing

|

||||

|

||||

#### Execution Environment

|

||||

1. **Virtual environment activation is critical** - Without it, execution fails silently

|

||||

2. **Output persistence must be explicit** - `ExecutePreprocessor` only modifies notebook in memory

|

||||

3. **Check execution counts** - `execution_count=None` means cell never executed

|

||||

4. **Handle different output types** - stream, execute_result, error, display_data
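
Points 3 and 4 can be checked mechanically once the executed notebook has been saved. The sketch below uses only the standard library and reads the notebook as plain JSON; `summarize_execution` and `load_and_summarize` are hypothetical names used for illustration.

```python
import json
from collections import Counter


def summarize_execution(nb: dict) -> dict:
    """Summarize which code cells ran and which output types they produced."""
    unexecuted = []            # indices of code cells whose execution_count is None
    output_types = Counter()   # e.g. {"stream": 3, "error": 1}
    for index, cell in enumerate(nb.get("cells", [])):
        if cell.get("cell_type") != "code":
            continue
        if cell.get("execution_count") is None:
            unexecuted.append(index)
        for output in cell.get("outputs", []):
            output_types[output.get("output_type", "unknown")] += 1
    return {"unexecuted": unexecuted, "output_types": dict(output_types)}


def load_and_summarize(path: str) -> dict:
    """Load a notebook file from disk and summarize it."""
    with open(path, encoding="utf-8") as f:
        return summarize_execution(json.load(f))
```

A non-empty `unexecuted` list after a run suggests the notebook was not executed, or was executed but never written back to disk.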

|

||||

|

||||

#### Common Debugging Steps

|

||||

1. **Verify basic execution:**

|

||||

```bash

|

||||

python3 -c "

|

||||

import json

|

||||

nb = json.load(open('path/to/notebook.ipynb'))

|

||||

print('Execution counts:', [cell.get('execution_count') for cell in nb['cells'] if cell['cell_type']=='code'])

|

||||

"

|

||||

```

|

||||

|

||||

2. **Check for execution errors:**

|

||||

```bash

|

||||

python3 inspect_notebook.py path/to/notebook.ipynb "error"

|

||||

```

|

||||

|

||||

3. **Look for specific output patterns:**

|

||||

```bash

|

||||

python3 inspect_notebook.py path/to/notebook.ipynb "your_pattern"

|

||||

```

|

||||

|

||||

### Creating Custom Tests

|

||||

|

||||

#### 1. Minimal Test Template

|

||||

|

||||

Create a simple notebook that tests basic functionality:

|

||||

|

||||

```json

|

||||

{

|

||||

"cells": [

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"# Test basic execution\n",

|

||||

"print('Hello from notebook!')\n",

|

||||

"\n",

|

||||

"# Test file creation\n",

|

||||

"with open('test.txt', 'w') as f:\n",

|

||||

" f.write('Test successful\\n')\n",

|

||||

"\n",

|

||||

"# Test error handling\n",

|

||||

"try:\n",

|

||||

" result = your_function_to_test()\n",

|

||||

" print(f'Result: {result}')\n",

|

||||

"except Exception as e:\n",

|

||||

" print(f'Error: {e}')"

|

||||

]

|

||||

}

|

||||

],

|

||||

"metadata": {

|

||||

"kernelspec": {

|

||||

"display_name": "Python 3",

|

||||

"language": "python",

|

||||

"name": "python3"

|

||||

}

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 4

|

||||

}

|

||||

```
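
The same template can be produced from a script instead of being written by hand, which helps when a test needs several variants. This is a minimal sketch using only the standard library; `make_minimal_notebook` and `write_notebook` are hypothetical helpers, and the structure simply mirrors the JSON template above.

```python
import json


def make_minimal_notebook(source_lines):
    """Build an nbformat 4 notebook dict with a single unexecuted code cell."""
    return {
        "cells": [
            {
                "cell_type": "code",
                "execution_count": None,  # filled in when the cell is executed
                "metadata": {},
                "outputs": [],
                "source": list(source_lines),
            }
        ],
        "metadata": {
            "kernelspec": {
                "display_name": "Python 3",
                "language": "python",
                "name": "python3",
            }
        },
        "nbformat": 4,
        "nbformat_minor": 4,
    }


def write_notebook(nb, path):
    """Write the notebook dict to disk as JSON."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(nb, f, indent=1, ensure_ascii=False)
```

For example, `write_notebook(make_minimal_notebook(["print('Hello from notebook!')\n"]), "test_notebook.ipynb")` would create a file that can be passed to the simulator.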

|

||||

|

||||

#### 2. Test Script Template

|

||||

|

||||

```bash

|

||||

#!/bin/bash

|

||||

set -e

|

||||

|

||||

echo "🧪 Testing [Your Feature]..."

|

||||

|

||||

# Clean up any previous test

|

||||

rm -f test_notebook.ipynb

|

||||

|

||||

# Generate or copy your test notebook

|

||||

cp your_test_notebook.ipynb test_notebook.ipynb

|

||||

|

||||

# Run in simulator

|

||||

echo "🚀 Running test in sim..."

|

||||

./test_notebook_colab_sim.sh test_notebook.ipynb

|

||||

|

||||

# Find the executed notebook

|

||||

NOTEBOOK_DIR=$(ls -1dt tmp/test_* | head -1)

|

||||

NOTEBOOK_PATH="$NOTEBOOK_DIR/test_notebook.ipynb"

|

||||

|

||||

# Analyze results

|

||||

echo "📋 Analyzing results..."

|

||||

python3 inspect_notebook.py "$NOTEBOOK_PATH" "your_search_term"

|

||||

|

||||

# Add your custom analysis

|

||||

python3 -c "

|

||||

import json

|

||||

with open('$NOTEBOOK_PATH') as f:

|

||||

nb = json.load(f)

|

||||

|

||||

# Your custom analysis logic here

|

||||

success = check_for_expected_outputs(nb)

|

||||

|

||||

if success:

|

||||

print('✅ PASS: Test succeeded!')

|

||||

else:

|

||||

print('❌ FAIL: Test failed!')

|

||||

exit(1)

|

||||

"

|

||||

|

||||

echo "🧹 Cleaning up..."

|

||||

rm -f test_notebook.ipynb

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Use Case: BAML Log Capture Testing

|

||||

|

||||

This section demonstrates how to use the general framework for a specific use case: testing BAML log capture in notebooks.

|

||||

|

||||

### Problem Statement

|

||||

|

||||

BAML (a language model framework) uses FFI bindings to a Rust binary and outputs logs to stderr. We need to test whether different log capture methods can successfully capture these logs in Jupyter notebook cells.
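
One capture method that could be put through this test is a redirect at the file-descriptor level, which also sees bytes written directly to descriptor 2 by native code that bypasses Python's `sys.stderr`. The sketch below is an illustration under stated assumptions, not something taken from the repository or from BAML: `capture_fd_stderr` is a hypothetical helper, and behavior inside a Jupyter kernel may differ from a plain Python process.

```python
import contextlib
import os
import sys
import tempfile


@contextlib.contextmanager
def capture_fd_stderr():
    """Redirect file descriptor 2 into a temp file; fills captured['text'] on exit."""
    captured = {"text": ""}
    sys.stderr.flush()                 # push pending Python-level writes first
    saved_fd = os.dup(2)               # keep a handle on the real stderr
    with tempfile.TemporaryFile(mode="w+b") as tmp:
        os.dup2(tmp.fileno(), 2)       # fd 2 now points at the temp file
        try:
            yield captured
        finally:
            sys.stderr.flush()
            os.dup2(saved_fd, 2)       # restore the real stderr
            os.close(saved_fd)
            tmp.seek(0)
            captured["text"] = tmp.read().decode("utf-8", errors="replace")


if __name__ == "__main__":
    with capture_fd_stderr() as captured:
        # Stands in for output written by native code straight to fd 2.
        os.write(2, b"log line written straight to fd 2\n")
    print(repr(captured["text"]))
```

The captured text is only available after the `with` block exits, because the temp file is read back during cleanup.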

|

||||

|

||||

### Test Implementation

|

||||

|

||||

#### Test Configuration (`simple_log_test.yaml`)

|

||||

|

||||

```yaml

title: "BAML Log Capture Test"
text: "Simple test for log capture"

sections:
  - title: "Log Capture Test"
    steps:
      - baml_setup: true
      - fetch_file:
          src: "walkthrough/01-agent.baml"
          dest: "baml_src/agent.baml"
      - file:
          src: "./simple_main.py"
      - text: "Testing log capture with show_logs=true:"
      - run_main:
          args: "What is 2+2?"
          show_logs: true

```

|

||||

|

||||

#### Test Function (`simple_main.py`)

|

||||

|

||||

```python

def main(message="What is 2+2?"):
    """Simple main function that calls BAML directly"""
    client = get_baml_client()

    # Call the BAML function - this should generate logs
    result = client.DetermineNextStep(f"User asked: {message}")

    print(f"Input: {message}")
    print(f"Result: {result}")
    return result

```

|

||||

|

||||

#### Log Capture Implementation

|

||||

|

||||

The current working implementation in `walkthroughgen_py.py`:

|

||||

|

||||

```python

# Imports this excerpt relies on
import os

from IPython.utils.capture import capture_output


def run_with_baml_logs(func, *args, **kwargs):
    """Test log capture using IPython capture_output"""
    # Ensure BAML_LOG is set
    if 'BAML_LOG' not in os.environ:
        os.environ['BAML_LOG'] = 'info'

    print(f"[LOG CAPTURE TEST] Running with BAML_LOG={os.environ.get('BAML_LOG')}...")

    # Capture both stdout and stderr
    with capture_output() as captured:
        result = func(*args, **kwargs)

    # Display captured outputs
    if captured.stdout:
        print("=== Captured Stdout ===")
        print(captured.stdout)

    if captured.stderr:
        print("=== Captured BAML Logs ===")
        print(captured.stderr)
    else:
        print("=== No BAML Logs Captured ===")

    print("=== Function Result ===")
    print(result)

    return result

```

|

||||

|

||||

### Test Execution

|

||||

|

||||

#### Main Test Script (`test_log_capture.sh`)

|

||||

|

||||

```bash

|

||||

#!/bin/bash

|

||||

set -e

|

||||

|

||||

echo "🧪 Testing BAML Log Capture..."

|

||||

|

||||

# Generate test notebook from YAML config

|

||||

echo "📝 Generating test notebook..."

|

||||

uv run python walkthroughgen_py.py simple_log_test.yaml -o test_capture.ipynb

|

||||

|

||||

# Run in simulator

|

||||

echo "🚀 Running test in sim..."

|

||||

./test_notebook_colab_sim.sh test_capture.ipynb

|

||||

|

||||

# Find the executed notebook

|

||||

NOTEBOOK_DIR=$(ls -1dt tmp/test_* | head -1)

|

||||

NOTEBOOK_PATH="$NOTEBOOK_DIR/test_notebook.ipynb"

|

||||

|

||||

echo "📋 Analyzing results from $NOTEBOOK_PATH..."

|

||||

|

||||

# Debug output

|

||||

echo "🔍 Dumping debug info..."

|

||||

python3 inspect_notebook.py "$NOTEBOOK_PATH" "run_with_baml_logs"

|

||||

|

||||

# Analyze for BAML log patterns

|

||||

echo "📊 Running log capture analysis..."

|

||||

python3 analyze_log_capture.py "$NOTEBOOK_PATH"

|

||||

|

||||

echo "🧹 Cleaning up..."

|

||||

rm -f test_capture.ipynb

|

||||

```

|

||||

|

||||

#### Analysis Script (`analyze_log_capture.py`)

|

||||

|

||||

```python

#!/usr/bin/env python3
import json
import sys
import os

def check_logs(notebook_path):
    """Check if BAML logs were captured in the notebook"""

    with open(notebook_path) as f:
        nb = json.load(f)

    found_log_pattern = False
    found_capture_test = False

    for i, cell in enumerate(nb['cells']):
        if cell['cell_type'] == 'code' and 'outputs' in cell:
            source = ''.join(cell.get('source', []))
            if 'run_with_baml_logs' in source:
                found_capture_test = True
                print(f'Found log capture test in cell {i}')

                # Check outputs for BAML logs
                for output in cell['outputs']:
                    if output.get('output_type') == 'stream' and 'text' in output:
                        text = ''.join(output['text'])
                        # Look for the specific BAML log pattern
                        if '---Parsed Response (class DoneForNow)---' in text:
                            found_log_pattern = True
                            print(f'✅ FOUND BAML LOG PATTERN in cell {i} output!')

    return found_capture_test, found_log_pattern

# Run analysis and return pass/fail
capture_test_found, log_pattern_found = check_logs(sys.argv[1])

if not capture_test_found:
    print('❌ FAIL: No log capture test found in notebook')
    sys.exit(1)

if log_pattern_found:
    print('✅ PASS: BAML logs successfully captured in notebook output!')
    sys.exit(0)
else:
    print('❌ FAIL: BAML log pattern not found in captured output')
    sys.exit(1)

```

|

||||

|

||||

### Expected Output Flow

|

||||

|

||||

#### Successful Test Run:

|

||||

```bash

|

||||

$ ./test_log_capture.sh

|

||||

|

||||

🧪 Testing BAML Log Capture...

|

||||

📝 Generating test notebook...

|

||||

Generated notebook: test_capture.ipynb

|

||||

🚀 Running test in sim...

|

||||

🧪 Creating clean test environment in: ./tmp/test_20250716_191106

|

||||

📁 Test directory will be preserved for inspection

|

||||

🐍 Creating fresh Python virtual environment...

|

||||

📦 Installing Jupyter dependencies...

|

||||

🏃 Running notebook in clean environment...

|

||||

✅ Notebook executed successfully!

|

||||

💾 Executed notebook saved with outputs

|

||||

|

||||

📋 Analyzing results from tmp/test_20250716_191106/test_notebook.ipynb...

|

||||

🔍 Dumping debug info...

|

||||

Found log capture test in cell 11

|

||||

|

||||

📤 OUTPUTS (3 outputs):

|

||||

Output 0: type=stream

|

||||

Text length: 49 chars

|

||||

> [LOG CAPTURE TEST] Running with BAML_LOG=info......

|

||||

Output 1: type=stream

|

||||

Text length: 1272 chars

|

||||

> 2025-07-16T19:11:22.445 [BAML [92mINFO[0m] [35mFunction DetermineNextStep[0m...

|

||||

🎯 Found patterns: ['BAML', 'Parsed', 'Response']

|

||||

|

||||

📊 Running log capture analysis...

|

||||

Found log capture test in cell 11

|

||||

✅ FOUND BAML LOG PATTERN in cell 11 output!

|

||||

✅ PASS: BAML logs successfully captured in notebook output!

|

||||

🧹 Cleaning up...

|

||||

```

|

||||

|

||||

### Key BAML-Specific Insights

|

||||

|

||||

1. **BAML logs go to stderr** - Due to the FFI bindings to the Rust binary

|

||||

2. **Requires `BAML_LOG=info`** - Environment variable controls verbosity

|

||||

3. **Logs include ANSI color codes** - Need to handle terminal formatting

|

||||

4. **Pattern matching** - Look for `---Parsed Response (class DoneForNow)---` to confirm successful execution

|

||||

5. **IPython capture_output() works** - Successfully captures stderr in notebook context
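
Insights 3 and 4 interact: if a color code ever lands inside the marker text, a plain substring check would miss it. The sketch below strips ANSI color sequences before matching. It assumes the captured stderr is available as a plain string; `strip_ansi` and `has_parsed_response` are hypothetical helper names, not part of BAML or of the test scripts in this repo.

```python
import re

# Matches ANSI SGR (color/style) sequences such as "\x1b[92m" and "\x1b[0m".
ANSI_SGR = re.compile(r"\x1b\[[0-9;]*m")

# Marker text quoted in insight 4 above.
PARSED_MARKER = "---Parsed Response (class DoneForNow)---"


def strip_ansi(text: str) -> str:
    """Remove ANSI color sequences so plain substring checks are reliable."""
    return ANSI_SGR.sub("", text)


def has_parsed_response(captured_stderr: str) -> bool:
    """Return True if the marker appears once color codes are removed."""
    return PARSED_MARKER in strip_ansi(captured_stderr)


# Hand-written sample line (not real BAML output) with colors inside the marker.
sample = (
    "\x1b[92mINFO\x1b[0m "
    "---Parsed Response (class \x1b[35mDoneForNow\x1b[0m)---"
)
print(has_parsed_response(sample))
```

Stripping first keeps the check independent of whether the log writer decides to colorize part of the marker line.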

|

||||

|

||||

### Iteration Loop Benefits

|

||||

|

||||

This framework enables rapid testing of different log capture approaches:

|

||||

|

||||

1. **Modify** the `run_with_baml_logs` function in `walkthroughgen_py.py`

|

||||

2. **Run** `./test_log_capture.sh`

|

||||

3. **Get** immediate pass/fail feedback

|

||||

4. **Debug** with `inspect_notebook.py` if needed

|

||||

5. **Repeat** until a working implementation is found

|

||||

|

||||

This same pattern can be applied to test any notebook functionality: library integrations, environment setup, output formatting, error handling, etc.
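
To reuse the loop for other notebook checks, the pass/fail step can be reduced to "does any cell output contain this text?". The sketch below does that with only the standard library, relying on the fact that an executed `.ipynb` file is JSON whose code cells carry an `outputs` list. The function name `notebook_output_contains` and the demo notebook are hypothetical and exist only for illustration; this is not the repo's `analyze` script.

```python
import json
import os
import tempfile


def notebook_output_contains(path: str, needle: str) -> bool:
    """Return True if any stream output of any code cell contains needle."""
    with open(path, encoding="utf-8") as f:
        nb = json.load(f)
    for cell in nb.get("cells", []):
        if cell.get("cell_type") != "code":
            continue
        for output in cell.get("outputs", []):
            if output.get("output_type") != "stream":
                continue
            text = output.get("text", "")
            # Stream text may be stored as one string or as a list of strings.
            if isinstance(text, list):
                text = "".join(text)
            if needle in text:
                return True
    return False


# Tiny hand-built notebook, used only to exercise the function.
demo_nb = {
    "nbformat": 4,
    "nbformat_minor": 5,
    "metadata": {},
    "cells": [
        {
            "cell_type": "code",
            "metadata": {},
            "execution_count": 1,
            "source": ["print('hello')"],
            "outputs": [
                {
                    "output_type": "stream",
                    "name": "stderr",
                    "text": ["first line\n", "PASS marker here\n"],
                }
            ],
        }
    ],
}

with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "demo.ipynb")
    with open(demo_path, "w", encoding="utf-8") as f:
        json.dump(demo_nb, f)
    found = notebook_output_contains(demo_path, "PASS marker")
    missing = notebook_output_contains(demo_path, "absent text")

print(found, missing)
```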

|

||||

@@ -64,6 +64,11 @@ try:

|

||||

ep.preprocess(nb, {'metadata': {'path': '.'}})

|

||||

print("\n✅ Notebook executed successfully!")

|

||||

|

||||

# Save the executed notebook back to disk

|

||||

with open('test_notebook.ipynb', 'w') as f:

|

||||

nbformat.write(nb, f)

|

||||

print("💾 Executed notebook saved with outputs")

|

||||

|

||||

# Show final directory structure

|

||||

print("\n📁 Final directory structure:")

|

||||

for root, dirs, files in os.walk('.'):

|

||||

@@ -85,7 +90,7 @@ EOF

|

||||

|

||||

# Run the notebook

|

||||

echo "🏃 Running notebook in clean environment..."

|

||||

python run_notebook.py

|

||||

source venv/bin/activate && python run_notebook.py

|

||||

|

||||

# Check what BAML files were created

|

||||

echo -e "\n📄 BAML files created:"

|

||||

|

||||

@@ -16,6 +16,14 @@ sections:

|

||||

|

||||

For this notebook, you'll need to have your OpenAI API key saved in Google Colab secrets.

|

||||

|

||||

## Where We're Headed

|

||||

|

||||

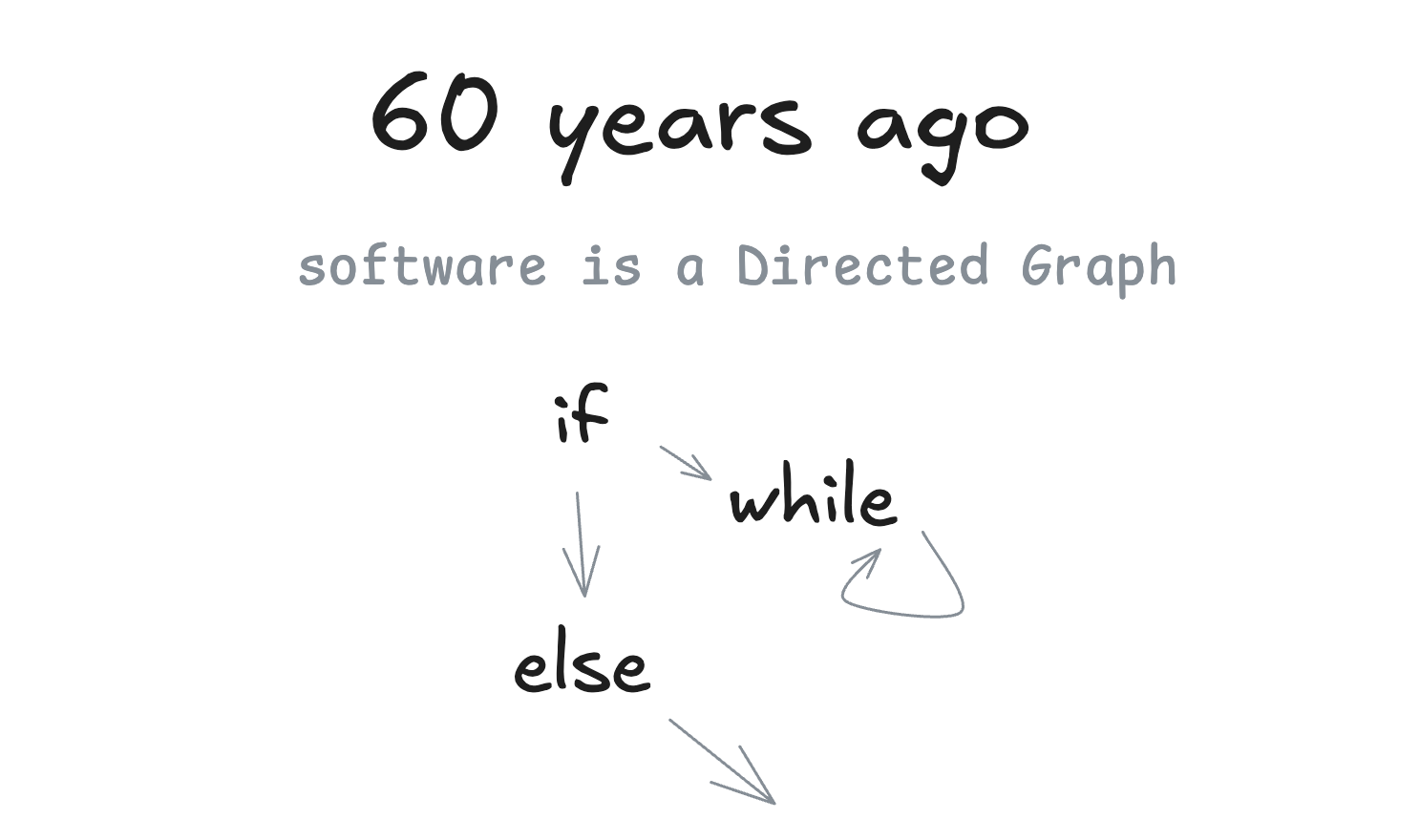

Before we dive in, let's understand the journey ahead. We're building toward **micro-agents in deterministic DAGs** - a powerful pattern that combines the flexibility of AI with the reliability of traditional software.

|

||||

|

||||

📖 **Learn more**: [A Brief History of Software](https://github.com/humanlayer/12-factor-agents/blob/main/content/brief-history-of-software.md)

|

||||

|

||||

|

||||

|

||||

- text: "Here's our simple hello world program:"

|

||||

- file: {src: ./walkthrough/00-main.py}

|

||||

- text: "Let's run it to verify it works:"

|

||||

@@ -55,6 +63,14 @@ sections:

|

||||

|

||||

BAML works much better in VS Code with the official extension, which provides syntax highlighting, autocomplete, inline testing, and an interactive playground. However, for this notebook tutorial, we'll work with BAML files directly without the enhanced IDE features.

|

||||

|

||||

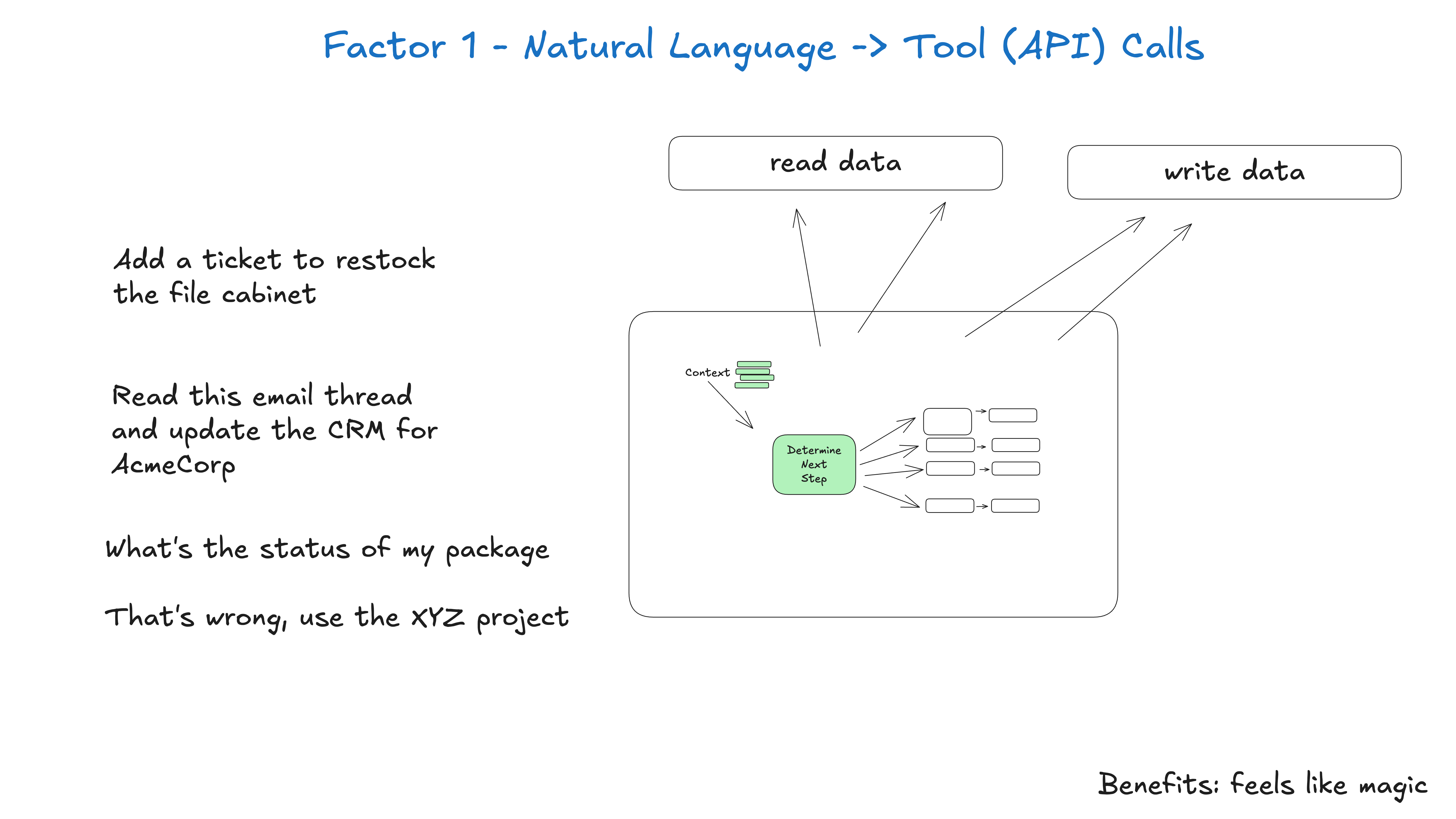

## Factor 1: Natural Language to Tool Calls

|

||||

|

||||

What we're building implements the first factor of 12-factor agents - converting natural language into structured tool calls.

|

||||

|

||||

📖 **Learn more**: [Factor 1: Natural Language to Tool Calls](https://github.com/humanlayer/12-factor-agents/blob/main/content/factor-01-natural-language-to-tool-calls.md)

|

||||

|

||||

|

||||

|

||||

First, let's set up BAML support in our notebook.

|

||||

- baml_setup: true

|

||||

- command: "!ls baml_src"

|

||||

@@ -91,12 +107,6 @@ sections:

|

||||

is done automatically by the get_baml_client() function

|

||||

|

||||

- run_main: {regenerate_baml: true, args: "Hello from the Python notebook!"}

|

||||

- text: |

|

||||

In a few cases, we'll enable the baml debug logs to see the inputs/outputs to and from the model.

|

||||

- run_main: {regenerate_baml: false, args: "Hello from the Python notebook!", show_logs: true}

|

||||

- text: |

|

||||

what's most important there is that you can see the prompt and how the output_format is injected

|

||||

to tell the model what kind of json we want to return.

|

||||

|

||||

- name: calculator-tools

|

||||

title: "Chapter 2 - Add Calculator Tools"

|

||||

@@ -108,6 +118,14 @@ sections:

|

||||

These are simple structured outputs that we'll ask the model to

|

||||

return as a "next step" in the agentic loop.

|

||||

|

||||

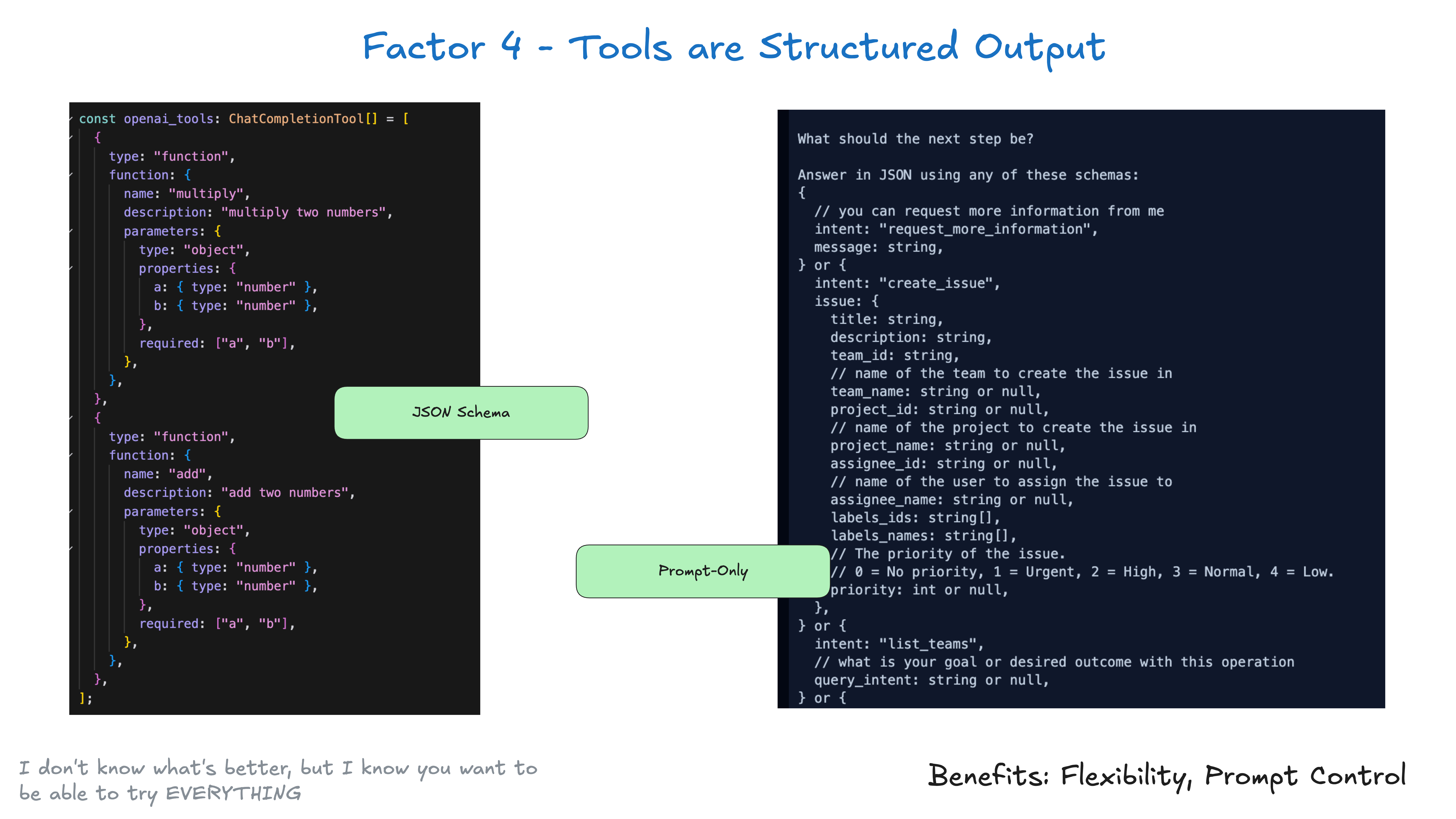

## Factor 4: Tools Are Structured Outputs

|

||||

|

||||

This chapter demonstrates that tools are just structured JSON outputs from the LLM - nothing more complex!

|

||||

|

||||

📖 **Learn more**: [Factor 4: Tools Are Structured Outputs](https://github.com/humanlayer/12-factor-agents/blob/main/content/factor-04-tools-are-structured-outputs.md)
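
As a rough illustration of that claim, the sketch below treats a "tool call" as nothing more than a JSON string that is parsed into a typed object and then acted on by ordinary code. The `AddTool` shape, the `intent` field, and the sample JSON are assumptions made for this example; the generated BAML client defines its own types.

```python
import json
from dataclasses import dataclass


@dataclass
class AddTool:
    """Hypothetical structured output for an 'add' tool call."""
    a: float
    b: float


def parse_tool_call(raw: str) -> AddTool:
    """Parse model output (a JSON string) into a typed tool call."""
    data = json.loads(raw)
    if data.get("intent") != "add":
        raise ValueError(f"unsupported intent: {data.get('intent')!r}")
    return AddTool(a=data["a"], b=data["b"])


# Stand-in for what the model might return; no LLM is called here.
llm_output = '{"intent": "add", "a": 3, "b": 4}'

call = parse_tool_call(llm_output)
result = call.a + call.b  # deterministic code "executes" the tool
print(result)
```

The model only produces data; deciding what to do with that data stays in regular, testable code.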

|

||||

|

||||

|

||||

|

||||

- fetch_file: {src: ./walkthrough/02-tool_calculator.baml, dest: baml_src/tool_calculator.baml}

|

||||

- command: "!ls baml_src"

|

||||

- text: |

|

||||

@@ -133,6 +151,20 @@ sections:

|

||||

- Each tool result is fed back to the agent

|

||||

- The agent continues until it has a final answer

|

||||

|

||||

## The Agent Loop Pattern

|

||||

|

||||

We're implementing the core agent loop - where the AI makes decisions, executes tools, and continues until done.
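
The loop can be sketched without any LLM by swapping in a stub for the decision step. Everything here is an assumption for illustration: `run_agent_loop`, `fake_decider`, and the event dictionaries are hypothetical names and shapes, and the walkthrough's real loop calls the BAML client instead of a stub.

```python
from typing import Callable


def run_agent_loop(events: list, decide_next_step: Callable, max_iterations: int = 3) -> str:
    """Ask for a next step, execute it, record it, and stop when done."""
    for _ in range(max_iterations):
        step = decide_next_step(events)
        events.append({"type": "tool_call", "data": step})

        if step["intent"] == "done_for_now":
            return step["message"]
        if step["intent"] == "add":
            result = step["a"] + step["b"]
            # Feed the tool result back so the next decision can see it.
            events.append({"type": "tool_response", "data": result})
        else:
            return f"Error: unexpected intent {step['intent']!r}"

    return f"Agent reached maximum iterations ({max_iterations}) without completing the task."


def fake_decider(events: list) -> dict:
    """Stand-in for the model: add once, then report the latest tool result."""
    for event in reversed(events):
        if event["type"] == "tool_response":
            return {"intent": "done_for_now", "message": f"The answer is {event['data']}"}
    return {"intent": "add", "a": 3, "b": 4}


events = [{"type": "user_input", "data": "can you add 3 and 4"}]
answer = run_agent_loop(events, fake_decider)
print(answer)
```

Bounding the loop with `max_iterations` keeps a confused model from running indefinitely.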

|

||||

|

||||

|

||||

|

||||

## Factor 5: Unify Execution State

|

||||

|

||||

Notice how we're storing everything as events in our Thread - this is Factor 5 in action!

|

||||

|

||||

📖 **Learn more**: [Factor 5: Unify Execution State](https://github.com/humanlayer/12-factor-agents/blob/main/content/factor-05-unify-execution-state.md)
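
One practical consequence of keeping everything as events is that the whole run can be serialized and resumed from a single list. The sketch below shows that round trip; the `Event` and `Thread` classes and their method names are hypothetical simplifications, not the walkthrough's actual `Thread` implementation.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Event:
    type: str
    data: object


@dataclass
class Thread:
    """Single source of truth: the ordered list of events."""
    events: list = field(default_factory=list)

    def append(self, type_: str, data: object) -> None:
        self.events.append(Event(type=type_, data=data))

    def serialize(self) -> str:
        """Dump the full execution state as JSON."""
        return json.dumps([asdict(e) for e in self.events])

    @classmethod
    def restore(cls, raw: str) -> "Thread":
        """Rebuild a thread from previously serialized state."""
        return cls(events=[Event(**item) for item in json.loads(raw)])


thread = Thread()
thread.append("user_input", "can you add 3 and 4")
thread.append("tool_call", {"intent": "add", "a": 3, "b": 4})
thread.append("tool_response", 7)

snapshot = thread.serialize()
resumed = Thread.restore(snapshot)
print(len(resumed.events), resumed.events[-1].data)
```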

|

||||

|

||||

|

||||

|

||||

Let's update our agent to handle tool calls properly:

|

||||

- file: {src: ./walkthrough/03-agent.py}

|

||||

- text: |

|

||||

@@ -141,10 +173,6 @@ sections:

|

||||

- text: |

|

||||

Let's try it out! The agent should now call the tool and return the calculated result:

|

||||

- run_main: {regenerate_baml: false, args: "can you add 3 and 4"}

|

||||

- text: |

|

||||

you can run with baml_logs enabled to see how the prompt changed when we added the New

|

||||

tool types to our union of response types.

|

||||

- run_main: {regenerate_baml: false, args: "can you add 3 and 4", show_logs: true}

|

||||

- text: |

|

||||

You should see the agent:

|

||||

1. Recognize it needs to use the add tool

|

||||

@@ -299,16 +327,9 @@ sections:

|

||||

- text: |

|

||||

Now let's test it with a simple calculation to see the reasoning in action:

|

||||

|

||||

**Note:** The BAML logs below will show the model's reasoning steps. Look for the `<reasoning>` tags in the logs to see how the model thinks through the problem before deciding what to do.

|

||||

- run_main: {args: "can you multiply 3 and 4", show_logs: true}

|

||||

- run_main: {args: "can you multiply 3 and 4"}

|

||||

- text: |

|

||||

You should see the reasoning steps in the BAML logs above. The model explicitly thinks through what it needs to do before making a decision.

|

||||

|

||||

💡 **Tip:** If you want to see BAML logs for any other calls in this notebook, you can use the `run_with_baml_logs` helper function:

|

||||

```python

|

||||

# Instead of: main("your message")

|

||||

# Use: run_with_baml_logs(main, "your message")

|

||||

```

|

||||

The model uses explicit reasoning steps to think through the problem before making a decision.

|

||||

|

||||

## Advanced Prompt Engineering

|

||||

|

||||

|

||||

@@ -1,9 +1,13 @@

|

||||

# Agent implementation with clarification support

|

||||

import json

|

||||

|

||||

def agent_loop(thread, clarification_handler):

|

||||

"""Run the agent loop until we get a final answer."""

|

||||

while True:

|

||||

def agent_loop(thread, clarification_handler, max_iterations=3):

|

||||

"""Run the agent loop until we get a final answer (max 3 iterations)."""

|

||||

iteration_count = 0

|

||||

while iteration_count < max_iterations:

|

||||

iteration_count += 1

|

||||

print(f"🔄 Agent loop iteration {iteration_count}/{max_iterations}")

|

||||

|

||||

# Get the client

|

||||

baml_client = get_baml_client()

|

||||

|

||||

@@ -64,6 +68,9 @@ def agent_loop(thread, clarification_handler):

|

||||

else:

|

||||

return "Error: Unexpected result type"

|

||||

|

||||

# If we've reached max iterations without a final answer

|

||||

return f"Agent reached maximum iterations ({max_iterations}) without completing the task."

|

||||

|

||||

class Thread:

|

||||

"""Simple thread to track conversation history."""

|

||||

def __init__(self, events):

|

||||

|

||||

@@ -83,86 +83,6 @@ def get_baml_client():

|

||||

init_code = "!baml-cli init"

|

||||

nb.cells.append(new_code_cell(init_code))

|

||||

|

||||

# Fourth cell: Add BAML logging helper

|

||||

logging_helper = '''# Helper function to capture BAML logs in notebook output

|

||||

import os

|

||||

import sys

|

||||

from IPython.utils.capture import capture_output

|

||||

import contextlib

|

||||

|

||||

def run_with_baml_logs(func, *args, **kwargs):

|

||||

"""Run a function and capture BAML logs in the notebook output."""

|

||||

# Ensure BAML_LOG is set

|

||||

if 'BAML_LOG' not in os.environ:

|

||||

os.environ['BAML_LOG'] = 'info'

|

||||

|

||||

print(f"Running with BAML_LOG={os.environ.get('BAML_LOG')}...")

|

||||

|

||||

# Capture both stdout and stderr

|

||||

with capture_output() as captured:

|

||||

result = func(*args, **kwargs)

|

||||

|

||||

# Display the result first

|

||||

if result is not None:

|

||||

print("=== Result ===")

|

||||

print(result)

|

||||

|

||||

# Display captured stdout if any

|

||||

if captured.stdout:

|

||||

print("\\n=== Output ===")

|

||||

print(captured.stdout)

|

||||

|

||||

# Display BAML logs from stderr

|

||||

if captured.stderr:

|

||||

print("\\n=== BAML Logs ===")

|

||||

# Format the logs for better readability

|

||||

log_lines = captured.stderr.strip().split('\\n')

|

||||

for line in log_lines:

|

||||

if 'reasoning' in line.lower() or '<reasoning>' in line:

|

||||

print(f"🤔 {line}")

|

||||

elif 'error' in line.lower():

|

||||

print(f"❌ {line}")

|

||||

elif 'warn' in line.lower():

|

||||

print(f"⚠️ {line}")

|

||||

else:

|

||||

print(f" {line}")

|

||||

|

||||

return result

|

||||

|

||||

# Alternative: Force stderr to stdout redirection

|

||||

@contextlib.contextmanager

|

||||

def redirect_stderr_to_stdout():

|

||||

"""Context manager to redirect stderr to stdout."""

|

||||

old_stderr = sys.stderr

|

||||

sys.stderr = sys.stdout

|

||||

try:

|

||||

yield

|

||||

finally:

|

||||

sys.stderr = old_stderr

|

||||

|

||||

def run_with_baml_logs_redirect(func, *args, **kwargs):

|

||||

"""Run a function with stderr redirected to stdout for immediate display."""

|

||||

if 'BAML_LOG' not in os.environ:

|

||||

os.environ['BAML_LOG'] = 'info'

|

||||

|

||||

print(f"Running with BAML_LOG={os.environ.get('BAML_LOG')} (stderr→stdout)...")

|

||||

|

||||

with redirect_stderr_to_stdout():

|

||||

result = func(*args, **kwargs)

|

||||

|

||||

if result is not None:

|

||||

print("\\n=== Result ===")

|

||||

print(result)

|

||||

|

||||

return result

|

||||

|

||||

# Set BAML log level (options: error, warn, info, debug, trace)

|

||||

os.environ['BAML_LOG'] = 'info'

|

||||

print("BAML logging helpers loaded!")

|

||||

print("- Use run_with_baml_logs() to capture and display logs after execution")

|

||||

print("- Use run_with_baml_logs_redirect() to see logs in real-time as they're generated")

|

||||

'''

|

||||

nb.cells.append(new_code_cell(logging_helper))

|

||||

|

||||

def process_step(nb, step, base_path, current_functions, section_name=None):

|

||||

"""Process different step types."""

|

||||

@@ -244,17 +164,7 @@ def process_step(nb, step, base_path, current_functions, section_name=None):

|

||||

else:

|

||||

main_call = "main()"

|

||||

|

||||

# Check if we should use logging wrapper

|

||||

use_logging = step['run_main'].get('show_logs', False)

|

||||

|

||||

if use_logging:

|

||||

# Use logging wrapper

|

||||

if call_parts:

|

||||

nb.cells.append(new_code_cell(f'run_with_baml_logs(main, {", ".join(call_parts)})'))

|

||||

else:

|

||||

nb.cells.append(new_code_cell('run_with_baml_logs(main)'))

|

||||

else:

|

||||

# Normal execution without logging

|

||||

# Execute the main function call

|

||||

nb.cells.append(new_code_cell(main_call))

|

||||

|

||||

def convert_walkthrough_to_notebook(yaml_path, output_path):

|

||||

|

||||

@@ -2,7 +2,7 @@

|

||||

"cells": [

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "7c856804",

|

||||

"id": "a55820ee",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"# Building the 12-factor agent template from scratch in Python"

|

||||

@@ -10,7 +10,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "6c96065f",

|

||||

"id": "ba52e30a",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Steps to start from a bare Python repo and build up a 12-factor agent. This walkthrough will guide you through creating a Python agent that follows the 12-factor methodology with BAML."

|

||||

@@ -18,7 +18,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "d8a45720",

|

||||

"id": "75b26c9b",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## Chapter 0 - Hello World"

|

||||

@@ -26,7 +26,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "a7a5467e",

|

||||

"id": "fa4b9e07",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Let's start with a basic Python setup and a hello world program."

|

||||

@@ -34,7 +34,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "563ef643",

|

||||

"id": "4e464227",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"This guide will walk you through building agents in Python with BAML.\n",

|

||||

@@ -46,7 +46,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "7db47ab2",

|

||||

"id": "99dac1bb",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Here's our simple hello world program:"

|

||||

@@ -55,7 +55,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "c9cc0758",

|

||||

"id": "9c6946fd",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -69,7 +69,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "5b920391",

|

||||

"id": "5523efac",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Let's run it to verify it works:"

|

||||

@@ -78,7 +78,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "29ba0259",

|

||||

"id": "6a437eb2",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -87,7 +87,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "26398377",

|

||||

"id": "d9aa0df6",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## Chapter 1 - CLI and Agent Loop"

|

||||

@@ -95,7 +95,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "0b666a9e",

|

||||

"id": "970c65da",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Now let's add BAML and create our first agent with a CLI interface."

|

||||

@@ -103,7 +103,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "a6191d3c",

|

||||

"id": "976a0fca",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"In this chapter, we'll integrate BAML to create an AI agent that can respond to user input.\n",

|

||||

@@ -140,7 +140,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "e44cf54f",

|

||||

"id": "ba1f7191",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"### BAML Setup\n",

|

||||

@@ -154,7 +154,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "f323b5b9",

|

||||

"id": "9910f8a3",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -164,7 +164,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "e9424fab",

|

||||

"id": "a4ad6e77",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -224,7 +224,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "b34a99bc",

|

||||

"id": "b99ba982",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -234,39 +234,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "8a2812f6",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"# Helper function to capture BAML logs in notebook output\n",

|

||||

"import os\n",

|

||||

"from IPython.utils.capture import capture_output\n",

|

||||

"\n",

|

||||

"def run_with_baml_logs(func, *args, **kwargs):\n",

|

||||

" \"\"\"Run a function and capture BAML logs in the notebook output.\"\"\"\n",

|

||||

" # Capture both stdout and stderr\n",

|

||||

" with capture_output() as captured:\n",

|

||||

" result = func(*args, **kwargs)\n",

|

||||

" \n",

|

||||

" # Display the captured output\n",

|

||||

" if captured.stdout:\n",

|

||||

" print(captured.stdout)\n",

|

||||

" if captured.stderr:\n",

|

||||

" # BAML logs go to stderr - format them nicely\n",

|

||||

" print(\"\\n=== BAML Logs ===\")\n",

|

||||

" print(captured.stderr)\n",

|

||||

" print(\"=================\\n\")\n",

|

||||

" \n",

|

||||

" return result\n",

|

||||

"\n",

|

||||

"# Set BAML log level (options: error, warn, info, debug, trace)\n",

|

||||

"os.environ['BAML_LOG'] = 'info'\n"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "d7efec52",

|

||||

"id": "ee716f3a",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -275,7 +243,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "eaa41eda",

|

||||

"id": "894474da",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Now let's create our agent that will use BAML to process user input.\n",

|

||||

@@ -286,7 +254,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "6048a2f5",

|

||||

"id": "dbf9d929",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -321,7 +289,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "88143079",

|

||||

"id": "b9421cd4",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Next, we need to define the BAML function that our agent will use.\n",

|

||||

@@ -339,7 +307,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "ee4a5f17",

|

||||

"id": "58d8bda5",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -349,7 +317,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "47435e42",

|

||||

"id": "1edc5279",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -358,7 +326,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "83a9feee",

|

||||

"id": "ee489cc1",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Now let's create our main function that accepts a message parameter:\n"

|

||||

@@ -367,7 +335,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "1231c8fc",

|

||||

"id": "f4fea69e",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -383,7 +351,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "2ddea81d",

|

||||

"id": "fe3fd9c7",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"Let's test our agent! Try calling main() with different messages:\n",

|

||||

@@ -391,16 +359,16 @@

|

||||

"- `main(\"Tell me a joke\")`\n",

|

||||

"- `main(\"How are you doing today?\")`\n",

|

||||

"\n",

|

||||

"in this case, we'll use the baml_generate function to \n",

|

||||

"generate the pydantic and python bindings from our \n",

|

||||

"baml source, but in the future we'll skip this step as it \n",

|

||||

"is done automatically by the get_baml_client() function \n"

|

||||

"in this case, we'll use the baml_generate function to\n",

|

||||

"generate the pydantic and python bindings from our\n",

|

||||

"baml source, but in the future we'll skip this step as it\n",

|

||||

"is done automatically by the get_baml_client() function\n"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "c3523c76",

|

||||

"id": "7fc1ee38",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -410,7 +378,7 @@

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "02f16835",

|

||||

"id": "8756df71",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

@@ -419,34 +387,13 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "e0e5c359",

|

||||

"id": "9b5ca88c",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"In a few cases, we'll enable the baml debug logs to see the inputs/outputs to and from the model.\n"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"id": "e7f1d260",

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"run_with_baml_logs(main, \"Hello from the Python notebook!\")"

|

||||

]

|

||||

"source": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "c1323d34",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"what's most important there is that you can see the prompt and how the output_format is injected\n",

|

||||

"to tell the model what kind of json we want to return.\n"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "dba3ff7f",

|

||||

"id": "e79f4d84",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## Chapter 2 - Add Calculator Tools"

|

||||

@@ -454,7 +401,7 @@

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"id": "83fd4e9e",

|

||||

"id": "4659d5ef",

|

||||

"metadata": {},

|